About Evaluations

Oliver Knill, January 26, 2022

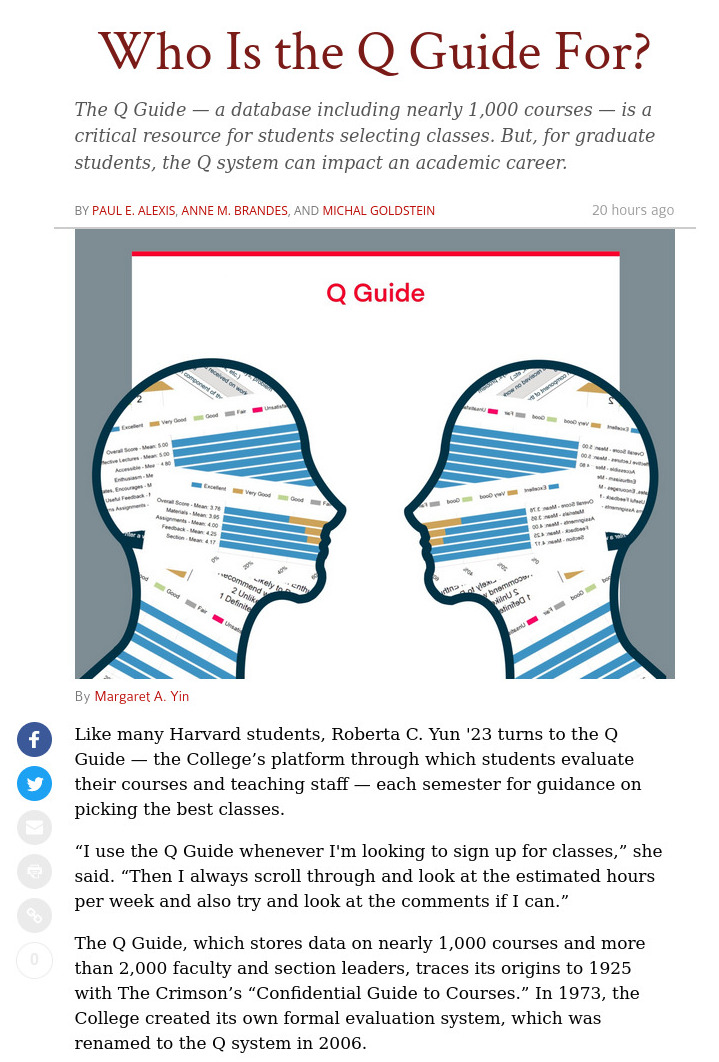

From the Crimson article

by Paul E. Alexis, Anne M. Brandes, and Michal Goldstein:


Mathematics preceptor Oliver R. Knill wrote that he believes there is

a danger of positive bias for teaching staff of certain identities.

"It is not only gender and race but also origin, age, title or look.

This again shows how important it is to evaluate using different

channels. Bias can be positive or negative," he wrote. "The famous Dr.

Fox experiment, where an actor gives a non-sense talk to medical doctors,

shows that also positive bias can happen."

"Dr. Fox was introduced and dressed like an expert and got stellar

evaluations, even though everything was garbage," he added. "They were

blinded by look, manner and initial third party praise."


The questions

Here were the questions and my electronically submitted answers:

What's the role of the Q Guide for you as preceptor in math? How do you make use of it?

Feedback is extremely important. For me personally, the free response answers are

the most valuable. For my own evaluations, I am grateful for the positive ones. I also

look at constructive critique and see what can be improved and done better. Non-constructive critique

I have learned to ignore, because there is nothing one can do about comments like "He has a Swiss accent".

The Q responses are also helpful when writing letters of recommendation.

What impact do you believe it has for preceptors generally and graduate students?

The Q guide is much better than in the past. I have been working at the department since 2000, and

initially, everything was eventually reduced to a single number attached to each teacher.

The "evaluate overall" parameter was used as a yardstick. I have seen graduate students

as well as faculty who were evaluated well in all aspects but then were hammered on that "evaluate overall"

question. Evaluation should not be a beauty contest. It should illuminate as many sides of

the teacher as possible.

Is that the appropriate amount of weight the Guide should hold in their career?

It can be relevant for letters of recommendation. But multiple evaluation channels need to be used.

When I evaluate a teacher, I can do that best by sitting in the classroom. Suboptimal, but still

possible, is to watch a taped lecture. The Q guide's free responses give valuable information too,

but they ignore, for example, how well a course is organized or how the teaching material

is selected, whether it is original, relevant or inspiring and whether it motivates.

What role does the Q Guide play when you search for jobs? If you haven't searched for other jobs, what

role have you heard that it plays for your colleagues in their job search?

It has been a good source of quotes for a teaching portfolio. For writers of letters of recommendation,

it is one of the sources which help with the evaluation. But this should only be one factor in

evaluating a teacher. I have seen excellent teachers who, maybe due to humility or a personality not

wanting to "show off", did not do well on the Q, while average teachers who can market and sell

themselves scored better on the Q. I recommend that everybody who is on the market get some

exposure elsewhere too, such as at conferences or by posting recorded talks on YouTube, so that employers can

see firsthand how good the presentations are and how clearly the person argues, illustrates or motivates.

What role if any do you think bias (i.e. racial or gender) plays in student evaluations?

Bias is a big factor in evaluations. It is always underestimated. The excellent Hollywood movie

"Moneyball" with Brad Pitt and Jonah Hill illustrates this well: baseball players were dismissed because

they "throw funny". The movie is a good metaphor because there, too, it was important to use statistics

to weigh various factors when evaluating a baseball player. The same holds for teachers.

It is not only gender and race but also origin, age, title or look. This again shows how important it is

to evaluate using different channels. Bias can be positive or negative. We usually talk about negative

bias, but there is also positive bias: the famous Dr. Fox experiment, where an actor gives a nonsense talk to

medical doctors, shows that positive bias can also happen. Dr. Fox was introduced and dressed like an expert

and got stellar evaluations even though everything was garbage. The talk about game theory was total gibberish.

The doctors were not idiots, but they were blinded by look, manner and initial third party praise.

[P.S. I myself am particularly interested in historical cases where mathematicians were rated low by peers and

even had difficulty finding jobs but later turned out to be of huge influence. Max Dehn in mathematics was such

a person. Then again, most work which was ranked highly turns out to be rather irrelevant, while some of the most

astounding works would never have made it through a referee evaluation process. Evaluation is hard. Amusing stories

like the Sokal affair illustrate this. Some journals have started to remove author and institution names

(when sending to referees) in order to bypass bias.]

What changes would you make to improve its current format? What would you like to see added?

Evaluation has to be clear and short. It needs to be doable in a reasonable time. The participation rate

has become a big problem since the evaluation went online in 2005. Some instructors recently started to game the Q system

by forcing their students to do the Q evaluation in class. I myself consider that cheating, because the

responses given in class, in person, are generally better. (Until 2005, the CUE guide was done

on paper and in class. It was more valuable then, as all students gave feedback.)

The Q, as of today, is good but looks unorganized. It should evaluate different aspects and then

average the score. Get rid of the "overall" as this is where bias comes in most. So:

1) Evaluation should be mandatory. A student only gets grades for courses which were evaluated.

2) Evaluation needs to cover different aspects. Simplicity rules. Example: Answer with Yes or No:

- was the course well enough organized?

- was the material relevant?

- was the material choice inspiring?

- was there a support system in place?

- did you learn enough for your concentration?

- were the exams or projects and homework fair?

- did your instructor know the material well?

- was your instructor approachable?

- was your instructor inspiring?

- did you get enough and timely feedback?

Then there should be one free response box where students can say whatever they like.

Either gripe or praise.
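
The proposal above (yes/no answers per aspect, then an average, with no "overall" question) could be tallied roughly as in the following minimal sketch. The aspect labels and the function names `aspect_scores` and `course_score` are made up for illustration; they are not part of the Q or of any existing system.

```python
from statistics import mean

# Hypothetical short labels for the ten yes/no questions listed above.
ASPECTS = [
    "organized", "relevant", "inspiring_material", "support",
    "learned_enough", "fair_assessment", "knows_material",
    "approachable", "inspiring_instructor", "timely_feedback",
]

def aspect_scores(responses):
    """Return the fraction of 'yes' answers for each aspect.

    `responses` is a list of dicts mapping an aspect label to True (yes)
    or False (no). A question a student skipped is simply left out of
    that aspect's count.
    """
    scores = {}
    for aspect in ASPECTS:
        answers = [r[aspect] for r in responses if aspect in r]
        if answers:
            scores[aspect] = sum(answers) / len(answers)
    return scores

def course_score(responses):
    """Average the per-aspect fractions into one number between 0 and 1."""
    scores = aspect_scores(responses)
    return mean(scores.values()) if scores else None

# Two made-up students: one says yes to everything,
# the other says yes to everything except course organization.
student_a = {a: True for a in ASPECTS}
student_b = {**student_a, "organized": False}
print(aspect_scores([student_a, student_b])["organized"])
print(course_score([student_a, student_b]))
```

Because the final number is an average over separate aspects, a single weak aspect lowers the score only in proportion, and the per-aspect fractions remain visible alongside it.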


Added February 2, 2022:

- About getting feedback: we are all very busy.

Students are very busy too. Don't make the evaluation process too

tedious. I mention this because just yesterday I got a link from the

AMS (American Mathematical Society) with a feedback poll. It was some

sort of evaluation. They try to figure out what works and what does not

work, which is a good thing. The questions were also quite good. But there

were so many questions, and the questionnaire was so long, that I had to give up:

after 10 minutes of answering page-long questions, I was 30 percent through

and bailed out. I also have other things to do. It is the same with the Q: if you make the

questionnaire in such a way that it takes an hour to complete, most are not going

to do it. The most important thing about evaluation is that you get

feedback from a substantial cross section of the members, not only from the extreme

cases, which are either extremely happy or extremely unhappy. The same of course

applies to voting: if the process of voting is made a difficult task, only the fanatics

are going to do it.

- More than 15 years ago, I saw an excellent talk at the Business School.

I'm still sorry that I did not write down which faculty member gave the presentation. It

addressed evaluation. The talk was about feedback and the strange fact that many businesses listen

carefully to the top 10 percent (the fans of the product) and to the bottom

10 percent (the complaints) but they hardly listen to the middle 80 percent (the silent majority).

When doing evaluation, we often just look at anecdotal evidence of a few fans

or listen to some complaints. What counts, however, are the mostly invisible regular customers.

Listening to the extreme cases can distort evaluation (both on the positive and on

the negative side).

Evaluations like the Q help to get a larger picture but there is still the danger that the

process of giving an evaluation is made so tedious and hard that many don't do it. I have often

given up filling out a feedback form when it was too long.

[There is of course some reason why so much insight comes from the business school.

In business, evaluation is extremely important. Businesses want their

products to succeed. They like to know what the market demands,

deliver the right stuff and quickly correct possible mistakes.

Businesses cannot afford BS; they cannot live with dogmas. What counts is what sells.

In education, certain dogmas are repeated and used despite the fact that

the dogmas have not worked out. (An example is the saga about memory and training

[like "you do not want to memorize, you want to understand", as if understanding could grow

without knowledge. There is a word for someone who does not know: ignoramus, and even in colloquial

language it is tied to stupidity.] But I'm not going to open that Pandora's box.)

Of course, education is not only business. The most

cost-effective way to teach would be to get rid of all teachers completely and let

students learn on their own. Well, this might actually happen eventually, if it should

work. So far, the attempts to do so have been a disaster. It seems to work for a few

(who are then very vocal about it) adding to anecdotal evidence that you can

teach yourself.]
